AVGO + VMW - Value In The Rough - Part 4

AVGO Future & Competition

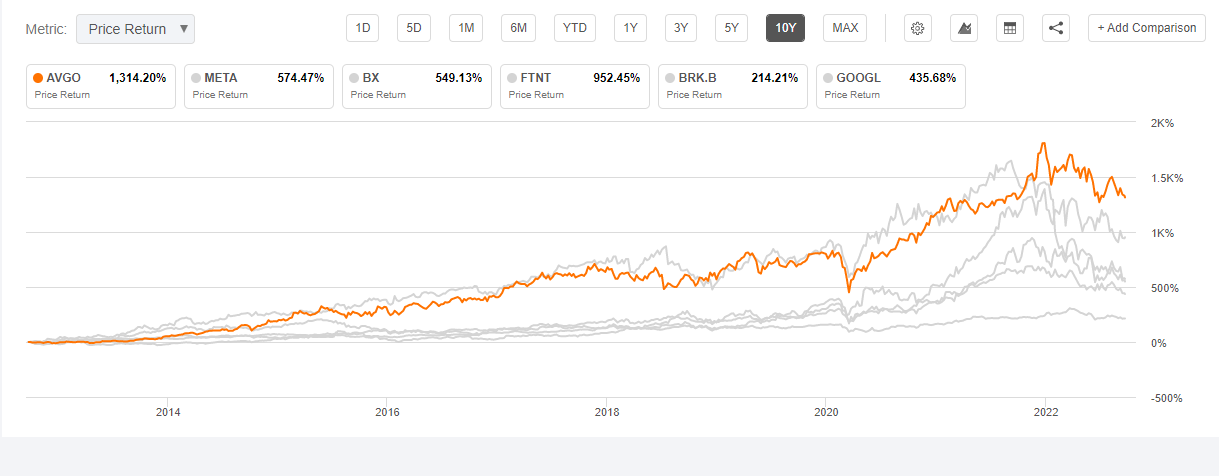

As we've outlined earlier, AVGO has a successful track record of finding valuable acquisition targets and growing shareholder value. This is supercharged by a favourable capital structure akin to that of BRK or PE firms with permanent capital.

To conduct the valuation analysis, we need a good sense of the future expectations for AVGO. The most important factor is probably leadership and talent, as they will continue to define AVGO's M&A and product expansion strategy.

Rare Leadership

AVGO is a company with many financially driven acquisitions and many characteristics reminiscent of legacy tech, so it is very easy for an analyst to apply the traditional legacy-tech bias against it. However, we believe Hock Tan, the mastermind behind AVGO, is among the rarest of managers and may eventually be considered a legend of the semiconductor industry alongside Jensen Huang of NVDA and Pat Gelsinger of INTC. Hock Tan is not the typical founder with huge equity ownership, but he has been instrumental in AVGO's success right from the start, when the company was created in 2006.

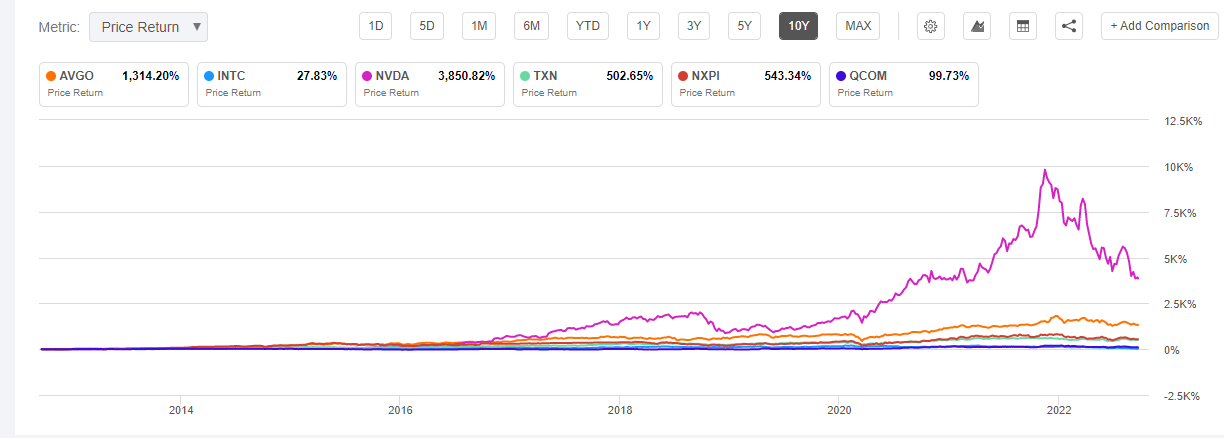

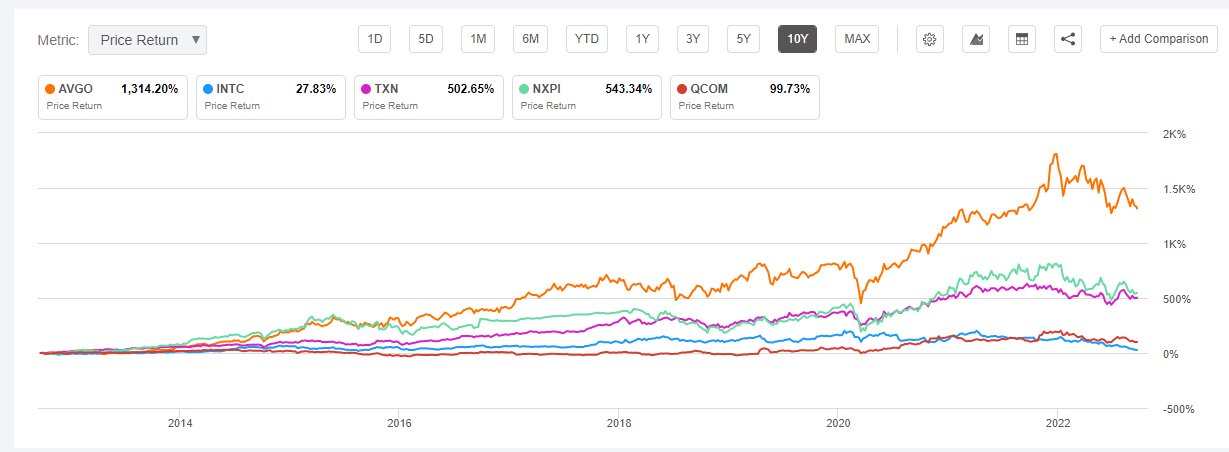

Hock Tan's leadership at AVGO is nothing short of epic. AVGO has delivered a ~14x return to shareholders over the past decade despite the recent selloff. Among the top 6 chip vendors we compared, this trails only NVDA's stellar ~39x return. In comparison, INTC (~1.4x), TXN (6x), NXPI (6.4x), and QCOM (2x) are all far behind AVGO and NVDA. Not surprisingly, NVDA is the only one with total founder control, while AVGO has semi-founder control.

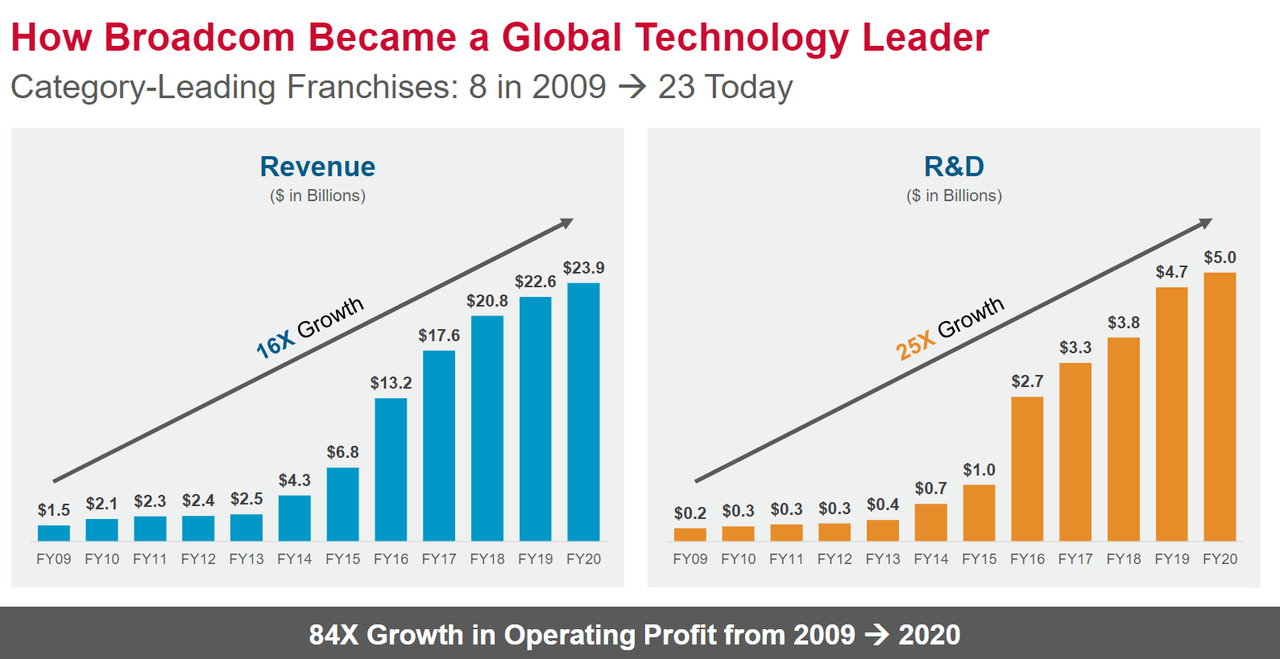

NVDA's success emerged from Jensen Huang's decade-long bet on CUDA (Compute Unified Device Architecture), accelerated computing, and ML/AI. AVGO's success rests more on a blend of technology and business, reflecting Hock Tan's unique mix of engineering and business background. From 2009 to 2020, AVGO grew revenue 16x, its R&D budget 25x, and operating profit 84x. The biggest driver of these results is its strong focus on being the category leader in each field it has acquired into.
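Multiples over different time spans are easier to compare once annualised. The sketch below converts the multiples quoted here and in the shareholder-return comparison into compound annual growth rates. The year counts are our own reading of the text ("past decade" as 10 years, "2009 to 2020" as 11 years), so treat the outputs as approximate.

```python
def cagr(multiple: float, years: float) -> float:
    """Compound annual growth rate implied by growing `multiple`-fold over `years` years."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# Multiples from the text; year counts are our assumption about the periods meant.
cases = {
    "AVGO shareholder return (10y)": (14, 10),
    "NVDA shareholder return (10y)": (39, 10),
    "AVGO revenue 2009-2020": (16, 11),
    "AVGO R&D budget 2009-2020": (25, 11),
    "AVGO operating profit 2009-2020": (84, 11),
}

for label, (multiple, years) in cases.items():
    print(f"{label}: {multiple}x over {years}y -> {cagr(multiple, years):.1%} per year")
```

On these assumptions, 16x revenue growth works out to roughly 29% a year, while the 84x operating profit multiple implies close to 50% a year, which shows how much operating leverage the acquisition playbook has generated.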

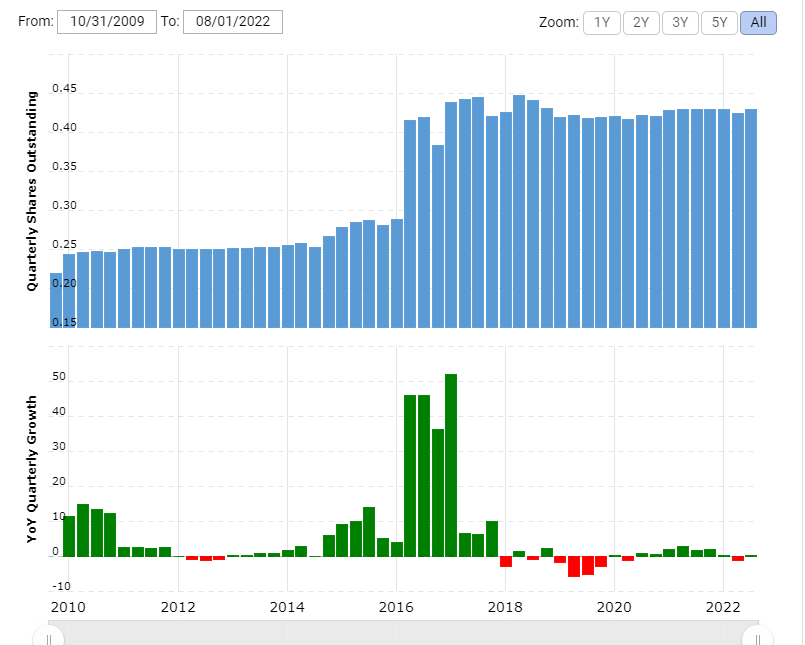

Unlike CSCO and many other legacy tech giants run by professional managers, AVGO has been able to expand its category leadership from 8 categories to 23. This allows AVGO to compound and create value out of every business it acquires. Even better, the end shareholder return has not been diluted by M&A (except for the Avago-Broadcom merger, which resulted in a ramp-up in share count). Hock Tan is able to leverage existing cash flow machines to buy more great businesses at low prices and grow them efficiently.

Hock Tan of AVGO, Jensen Huang of NVDA, and Pat Gelsinger of INTC - these three executives share similar traits:

- Bold technical vision in technology roadmaps that few believed in at the beginning but that turned out to be the future.

- Sharp business acumen on the future of the industry, the ecosystem, and business profitability.

- Perseverance in execution to ensure long-term visions come to fruition.

But it appears that these three executives are once again being challenged. NVDA is facing another cyclical headwind. AVGO continues to be misunderstood by investors in general. And INTC is facing a dark moment as investors begin to doubt Pat Gelsinger's ability to turn INTC around. However, excellent leadership tends to prevail over the long term, so investors should remain patient.

Business Outlook

At a high level, a few factors make us bullish on AVGO:

- Management is savvy, has the right sense of ownership, and possesses an aggressiveness rarely seen among legacy tech giant managers.

- The semiconductor industry's overarching theme is consolidation, and AVGO has been leading here for many years.

- Execution is very strong and the track record is underappreciated.

These will continue to be the long-term fundamental drivers of AVGO's success.

Turning to specific product lines, let's go through them one by one.

Consumer networking for AVGO will continue to grow at a low-teens rate thanks to the iPhone and demand for the latest standards like Wi-Fi 7. AVGO is underperforming relative to QCOM's Atheros, which has had great success in recent years due to bundling with OEMs. However, AVGO's products remain strong, especially in high-performance Apple devices. Furthermore, the coming wave of IoT and 5G, which brings expanded connectivity, will further benefit AVGO's product ASPs (Average Selling Prices), volumes, and refresh cycles. This market is big enough to serve both AVGO and QCOM. Although we are slightly more bullish on the latter in this field, we expect AVGO to maintain its current position.

Enterprise networking is AVGO's biggest growth driver, with clear leadership at ~70% market share. INTC ruined Barefoot, which had a nice software layer combined with a DSA (Domain Specific Architecture) chip. Luckily for INTC, the Barefoot founder stayed through the management chaos (c. 2018 to 2020) and was then appointed by Gelsinger as head of NEX. INTC has admitted that it is 1-2 years behind AVGO, and it has recently opened up IFS (Intel Foundry Services) to manufacture Silicon One, CSCO's switch ASIC. MRVL (Marvell Technology) and NVDA also have strong switch ASICs, but they too generally lag AVGO by 1-2 years and have far less OEM and ecosystem support.

AVGO's comprehensive offerings, with fast time-to-market for each new technology generation, varying degrees of programmability and performance, and the most mature software and OEM partnerships, will continue to be its strongest moats.

AVGO is the preferred vendor for customers to work with.

NVDA is probably the most important competitor in this space at the moment. In recent years, NVDA's great success in GPGPU has made it the winner in enterprise and HPC (High Performance Computing) data centres (DC). To scale GPGPU workloads out to hundreds of machines, NVDA built a new high-performance networking architecture that parallelises GPGPU work not only at the machine level but also at the pod and cluster levels. This requires high-performance switches and NICs (Network Interface Cards), which is why NVDA agreed to acquire Mellanox in 2019 (outbidding INTC in the process).
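A back-of-the-envelope model shows why scale-out leans so heavily on the switch and NIC. The sketch assumes data-parallel training synchronised with ring all-reduce, a common collective algorithm that we picked for illustration (the text does not name one). In a ring all-reduce, each of N GPUs sends 2(N-1)/N times the gradient size per step. The gradient size and link speeds below are hypothetical.

```python
def ring_allreduce_bytes_per_gpu(num_gpus: int, gradient_bytes: float) -> float:
    """Bytes each GPU sends in one ring all-reduce (reduce-scatter + all-gather)."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return 2 * (num_gpus - 1) / num_gpus * gradient_bytes


def allreduce_seconds(num_gpus: int, gradient_bytes: float, link_bytes_per_s: float) -> float:
    """Bandwidth-only lower bound on communication time (ignores latency and overlap)."""
    return ring_allreduce_bytes_per_gpu(num_gpus, gradient_bytes) / link_bytes_per_s


GRADIENT_BYTES = 10e9  # hypothetical: ~10 GB of gradients exchanged per training step
for gbits in (100, 400):  # hypothetical per-GPU network bandwidth in Gbit/s
    link = gbits * 1e9 / 8  # convert to bytes per second
    for n in (8, 64, 512):
        t = allreduce_seconds(n, GRADIENT_BYTES, link)
        print(f"{gbits} Gb/s, {n:>3} GPUs: {t:.2f} s of communication per step")
```

Per-GPU traffic approaches twice the gradient size as the cluster grows, so communication time is governed almost entirely by link bandwidth: a 4x faster network cuts it by 4x, which is the economic case for owning the switch and NIC.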

NVDA's DPU (Data Processing Unit) + Mellanox + NVDA's GPGPU + NVDA's recently announced CPU is a powerful mix. If ML/AI is the future, then the GPGPU, rather than the CPU, sits at the centre of compute. Inside the server box, NVDA's CPU will do pre-processing for the GPGPU, and its DPU will handle networking and security offloading for the GPGPU. NVDA's switches then connect the servers together with high bandwidth, allowing users to treat thousands of servers as one machine.

However, NVDA has a notorious reputation within the industry, the polar opposite of AVGO's. From time to time, NVDA has burned partners for its own benefit. In many ways, this resembles AAPL's love-hate relationship with its ecosystem partners. On the one hand, NVDA's products offer strong performance, great vision, and a great user experience. On the other hand, enterprises that use its chips to build their products and infrastructure end up paying 2x+ compared to alternative solutions. And NVDA won't actively help them during times of crisis or when they encounter product issues (e.g., MSFT's original Xbox).

AVGO, on the other hand, has "IT Switzerland" status. It doesn't have a monopoly over CPUs or SoCs (System-on-Chip) like INTC or QCOM do. It doesn't burn its customers or distance itself from them. And more importantly, many AVGO customers have a partnership mentality.

In the SDN revolution, AVGO supplied chips to startups like ANET and collaborated with them in building the networking OS stack to slay the dragon that was CSCO. AVGO was also instrumental in helping hyperscalers like AWS and Meta build their own proprietary hardware and software networking stacks, helping them turn those stacks into part of their competitive edge.

As a result, AVGO is the go-to vendor when customers (often cutting-edge startups and growing tech companies) want to avoid vendor lock-in or experiment with new architectures to deliver profoundly better performance/cost.

This time is no different. As NVDA's status surges amid rising DC spending, customers have been asking AVGO more frequently for networking chips so that they have an alternative to NVDA and can avoid vendor lock-in. AVGO's strong relationships with hyperscalers, hardware makers, software vendors, and many cutting-edge innovators are a strong value proposition to customers, even though NVDA has a more powerful product and more cost-competitive bundles enabled by its GPGPU monopoly.

We believe AVGO will continue to be the preferred vendor to work with, especially in high performance networking and accelerators. The demand for avoiding vendor lock-in, keeping optionality, and sustaining innovation, will push AVGO into a strong competitive position for future growth.

AVGO is one of the lesser-known winners among fabless vendors that focus on turnkey solutions for hardware makers.

Every company is becoming a software company. The critical success factor for many fabless vendors in the future will be their ability to innovate and co-integrate software with hardware.

If you look at the success of QCOM, NVDA, and AVGO against peers with similar levels of R&D and engineering capacity, it is clear that they win not only because of sheer technological innovation. These three, and many other successful fabless vendors, gained an edge by providing SDKs (Software Development Kits), API support, and hardware reference designs to end users and ecosystem partners.

For example, with comprehensive SDKs, an app developer no longer needs to worry about how to optimise the software for one particular chip, and can therefore deliver upper-stack innovation at a faster pace. With hardware reference designs, hardware makers that procure the chip don't need a team of talented engineers to design the hardware from the ground up. They can simply port the reference design, tweak it to their unique specifications, and ship the product in weeks or months instead of years.
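The SDK point can be illustrated with a hardware abstraction layer: the application codes against one interface, and each chip ships a backend behind it. This is a minimal sketch; every class and method name is hypothetical and does not correspond to any real AVGO, QCOM, or NVDA SDK, and the "checksum" is a simple 16-bit sum chosen only to give the backends something to compute.

```python
from abc import ABC, abstractmethod


class PacketEngine(ABC):
    """Hypothetical SDK interface that application code programs against."""

    @abstractmethod
    def checksum(self, payload: bytes) -> int:
        ...


class GenericCpuBackend(PacketEngine):
    """Portable fallback: a simple 16-bit sum computed in software."""

    def checksum(self, payload: bytes) -> int:
        return sum(payload) & 0xFFFF


class ChipXBackend(PacketEngine):
    """Stand-in for a vendor backend; a real one would call into the chip's driver."""

    def checksum(self, payload: bytes) -> int:
        # Same result as the CPU path; only the (imagined) execution venue differs.
        total = 0
        for byte in payload:
            total = (total + byte) & 0xFFFF
        return total


def app_logic(engine: PacketEngine, payload: bytes) -> int:
    # The application never needs to know which chip sits underneath.
    return engine.checksum(payload)


for backend in (GenericCpuBackend(), ChipXBackend()):
    print(type(backend).__name__, app_logic(backend, b"hello"))
```

Swapping chips means swapping the backend object, while `app_logic` stays untouched, which is the productivity gain the vendor's SDK sells.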

From this we can see that AVGO focuses on the architectural, big-picture value it can give hardware customers. It delivers massive amounts of value not only by making chips for customers, but by making sure chip users and system developers can use those chips to do their jobs better.

QCOM is repeating this success in cars, NVDA is repeating this success in DPU, and AVGO is continuing the playbook in networking while eyeing something greater.

DSA, Networking Compiler, AI Compiler, and Accelerator Compiler

As we've noted in previous reports, DSA (Domain Specific Architecture) is the chip design of the future. The basic idea is that, to keep Moore's Law intact, beyond the normal transistor shrinkage (roughly 0.7x in linear dimensions per process node) and design tweaks that provide incremental gains, there is a tremendous opportunity to develop specialised accelerators (similar to ASICs) that cater to a specific domain rather than one particular application (hence a wider scope than an ASIC).
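A quick worked equation shows why the conventional ~0.7x shrink matters: it applies to linear dimensions, so the area of a given circuit scales with its square. Here $L$ is a linear feature size, $A$ the area of a fixed circuit, and $\rho$ transistor density (the symbols are ours). This is an idealised view; modern nodes no longer shrink every feature uniformly.

$$
L_{\text{new}} \approx 0.7\,L_{\text{old}}
\;\Rightarrow\;
A_{\text{new}} \approx 0.7^{2}\,A_{\text{old}} = 0.49\,A_{\text{old}}
\;\Rightarrow\;
\frac{\rho_{\text{new}}}{\rho_{\text{old}}} \approx \frac{1}{0.49} \approx 2.04
$$

Roughly doubling density per node is the traditional cadence behind Moore's Law; the DSA argument is that, as these gains get harder to come by, specialisation has to make up the difference.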

For example, an AV1 video decoder ASIC could deliver a 100x performance gain versus a generic CPU, but it can only decode video in AV1 format. A DSA accelerator, by contrast, could decode all sorts of video (and audio) formats at 50x versus the CPU. The resulting media engine can then handle all media-related compute, providing a great tradeoff between function-specific acceleration and general usability.
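The ASIC-versus-DSA tradeoff becomes clear once you weight each engine by a realistic workload mix. The sketch uses the 100x and 50x figures from the text; the workload mix (half AV1, the rest H.264 and audio) is a hypothetical assumption, and formats an engine cannot handle are assumed to fall back to the CPU at 1x.

```python
from typing import Dict


def blended_speedup(mix: Dict[str, float], speedup: Dict[str, float]) -> float:
    """Overall speedup vs the CPU for a workload mix.

    mix maps format -> share of original CPU time (shares sum to 1).
    speedup maps format -> acceleration factor; missing formats run on the CPU (1x).
    """
    new_time = sum(share / speedup.get(fmt, 1.0) for fmt, share in mix.items())
    return 1.0 / new_time


# Hypothetical workload: share of media-processing CPU time by format.
mix = {"av1": 0.5, "h264": 0.3, "aac_audio": 0.2}

asic = {"av1": 100.0}  # fixed-function AV1 decoder; everything else falls back to the CPU
dsa = {"av1": 50.0, "h264": 50.0, "aac_audio": 50.0}  # domain-wide media engine

print(f"AV1 ASIC:  {blended_speedup(mix, asic):.1f}x overall")
print(f"Media DSA: {blended_speedup(mix, dsa):.1f}x overall")
```

Under this mix the ASIC's 100x peak yields only about 2x overall, because half the work never touches it, while the DSA's lower 50x peak applies to everything. The narrower the real workload, the more the ASIC wins; the broader it is, the more the DSA wins.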

DSA is the future, but it requires enormous effort to bring to reality. Software has traditionally been developed for a monolithic CPU world, where the developer doesn't need to care about optimising execution for one particular ASIC or DSA chip. Therefore, a middleware or orchestration layer is required to route each part of the software's execution to the correct part of the DSA. For example, when you are recording a TikTok video, you want the camera feed to be processed by the DSP (Digital Signal Processor), the video encoding to be done by the media engine, the AI workloads to run on the NPU (Neural Processing Unit), and other functions to be handled by the CPU. This complex routing and optimisation is a big headache for developers.
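The routing job described here can be reduced to a toy dispatcher: each task type maps to a preferred engine, with the CPU as the fallback when a chip lacks that engine. This is a minimal sketch with hypothetical task and engine names; a real orchestration layer (compilers, runtimes, drivers) must also handle data movement, scheduling, and synchronisation between engines, none of which is modelled here.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical mapping of tasks to specialised engines, mirroring the TikTok example.
ENGINE_FOR_TASK: Dict[str, str] = {
    "camera_capture": "DSP",
    "video_encode": "media_engine",
    "ai_effects": "NPU",
}


def route(task: str, available: Set[str]) -> str:
    """Send a task to its specialised engine if the chip has one, else to the CPU."""
    engine = ENGINE_FOR_TASK.get(task, "CPU")
    return engine if engine in available else "CPU"


def plan(tasks: List[str], available: Set[str]) -> List[Tuple[str, str]]:
    """Route every task in a workload."""
    return [(task, route(task, available)) for task in tasks]


recording = ["camera_capture", "ai_effects", "video_encode", "ui_rendering"]

# A chip with all three accelerators.
print(plan(recording, {"DSP", "media_engine", "NPU", "CPU"}))
# A chip without an NPU: the AI stage falls back to the CPU.
print(plan(recording, {"DSP", "media_engine", "CPU"}))
```

Even this toy version shows why the layer belongs to the platform rather than each app: the same application code runs unchanged on chips with different engine sets.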

Unlike the CPU, which is built around scalar, largely serial execution, these accelerators follow vastly different programming models and require completely new sets of skills for developers to build software efficiently.

NVDA's deepest moat is its CUDA software, which makes coding and ML software development on its GPGPUs super easy. Developers write C/C++ code with a few extensions, much as they would when developing apps for the CPU, and NVDA's CUDA compiler suite is responsible for translating that code into massively parallel machine code, with tons of hidden tweaks to make it super efficient.
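To illustrate the programming model (this is plain Python, not CUDA), the sketch below mimics how a CUDA kernel is written: the developer writes scalar code for a single thread, which computes its global index from its block and thread IDs, and the runtime launches it across a grid of threads. Here the "launch" is just a sequential loop; on a GPU the same per-thread function would run across thousands of cores in parallel. The function names `saxpy_kernel` and `launch` are ours.

```python
import math
from typing import Callable, List


def saxpy_kernel(block_idx: int, block_dim: int, thread_idx: int,
                 a: float, x: List[float], y: List[float], out: List[float]) -> None:
    """Per-thread code, written as if for one element (CUDA-style SAXPY: a*x + y)."""
    i = block_idx * block_dim + thread_idx  # global thread index
    if i < len(x):  # guard: the last block may contain spare threads
        out[i] = a * x[i] + y[i]


def launch(kernel: Callable[..., None], grid_dim: int, block_dim: int, *args) -> None:
    """Sequential stand-in for a GPU launch: run every (block, thread) pair."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


n = 10
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n
block_dim = 4
grid_dim = math.ceil(n / block_dim)  # 3 blocks of 4 threads cover 10 elements
launch(saxpy_kernel, grid_dim, block_dim, 2.0, x, y, out)
print(out)
```

The developer only ever reasons about one element; deciding how the grid maps onto hardware, and the many optimisations underneath, is the toolchain's job, which is exactly what makes the CUDA ecosystem sticky.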

The same is true in enterprise networking, where networking functions are becoming more complex and versatile as hyperscalers compete fiercely for better efficiency and differentiation.

AI requires a compiler, and so does SDN, according to the Barefoot Networks founder and now head of INTC's NEX division:

You’ve got the Xeon servers, you’ve got the IPU (Convequity: Infrastructure Processing Unit, INTC's term for DPU) connected to it, and you’ve got a series of switches. And then you’ve got another IPU at the other end and a Xeon server. Just think about that whole pipeline, which now becomes programmable in its behavior. So if I want to turn that pipeline into a congestion control algorithm that I came up with, which suits me and my customers better than anything that has been deployed in fixed function hardware, I can now program it to do it. If I want to do something – installing firewalls, gateways, encapsulations, network virtualization, whatever – in that pipeline, I can program it, whether I’m doing it in the kernel with eBPF or in userspace with DPDK. I can write a description at a high level of what that entire pipeline is, and I don’t care really which parts go into the kernel or into the user space, or into IPU or into the switch, because it can actually be partitioned and compiled down into that entire pipeline now that we’ve got it all to be programmable in the same way.

So I’m dreaming here. I think that this is an inevitability, where it will go with or without my help. In fact, I think at this point, we’re down that path. But this is a path that we’re very committed to in order to enable that through an open ecosystem. Of course, I want those elements all to be Intel. But still we want an ecosystem that allows you to do this in a vendor-agnostic way.

Programming The Network With Intel NEX Chief Nick McKeown
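McKeown's idea of writing one high-level pipeline description and letting a compiler partition it across the switch, IPU, kernel (eBPF), and userspace (DPDK) can be sketched as a toy placement pass. This is a greedy illustration under our own assumptions: each stage declares the capabilities it needs, each target along the packet path declares what it supports, and a stage is placed on the earliest target that can run it without moving backwards along the path. Real P4 or eBPF toolchains are far more involved, and all stage and capability names here are hypothetical.

```python
from typing import Dict, List, Set, Tuple

# Targets in packet-path order, each with the (hypothetical) capabilities it supports.
PATH: List[Tuple[str, Set[str]]] = [
    ("switch", {"match_action", "encap"}),
    ("ipu", {"match_action", "encap", "crypto"}),
    ("kernel_ebpf", {"match_action", "stateful"}),
    ("userspace_dpdk", {"match_action", "encap", "crypto", "stateful"}),
]


def partition(stages: List[Tuple[str, Set[str]]]) -> Dict[str, str]:
    """Place each stage, in order, on the first capable target at or after the previous one."""
    placement: Dict[str, str] = {}
    cursor = 0  # stages may not move backwards along the packet path
    for name, needs in stages:
        for idx in range(cursor, len(PATH)):
            target, caps = PATH[idx]
            if needs <= caps:  # target supports every capability the stage needs
                placement[name] = target
                cursor = idx
                break
        else:
            raise ValueError(f"no downstream target can run stage {name!r}")
    return placement


pipeline = [
    ("acl_firewall", {"match_action"}),
    ("vxlan_encap", {"encap"}),
    ("ipsec", {"crypto"}),
    ("congestion_control", {"stateful"}),
]
print(partition(pipeline))
```

The point of the sketch is the division of labour McKeown describes: the operator writes `pipeline` once, and where each stage physically runs becomes a compiler decision that can change as the hardware underneath changes.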

Along this route, it's conceivable that the number of vendors may shrink and that existing vendors may be disrupted by innovators. Again, we are reassured, and somewhat surprised, to see that AVGO is the leader along this path. AVGO is building a unified set of APIs across its networking chips, which offer varying degrees of programmability and fixed-function acceleration. This opens up a huge space for imagination. Networking chips could offload a host of functions, including firewalls, gateways, encapsulation, and many more. Hyperscalers and cloud developers could leverage the agility of the cloud while also getting ASIC-like tight hardware/software integration that delivers big performance and efficiency gains. Imagine if PANW could run Prisma SASE on GCP atop AVGO's Jericho (complex logic) and Tomahawk (high-speed switching), achieving c. 80% of the performance/cost of FTNT's tightly coupled hardware/software while preserving 100% of the agility, speed of innovation, and scalability offered by the cloud.
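To make "offloading a firewall" concrete: at its core, a switch-resident firewall is a match-action table, in which each entry matches header fields and specifies an action, and the highest-priority matching entry wins. The sketch models that in software with Python's standard `ipaddress` module. The rules and field names are hypothetical, and it says nothing about how Jericho or Tomahawk actually implement their tables.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Rule:
    src_net: str             # source prefix, e.g. "10.0.0.0/8"
    dst_port: Optional[int]  # None matches any destination port
    action: str              # "allow" or "drop"


# Entries in priority order, as in a TCAM-style table: the first match wins.
ACL: List[Rule] = [
    Rule("203.0.113.0/24", None, "drop"),  # block a known-bad range
    Rule("10.0.0.0/8", 22, "allow"),       # SSH only from the internal network
    Rule("0.0.0.0/0", 22, "drop"),         # SSH from anywhere else
    Rule("0.0.0.0/0", 443, "allow"),       # HTTPS from anywhere
]
DEFAULT_ACTION = "drop"


def lookup(src_ip: str, dst_port: int, table: List[Rule] = ACL) -> str:
    """Return the action of the first rule matching the packet's source IP and port."""
    addr = ipaddress.ip_address(src_ip)
    for rule in table:
        if addr in ipaddress.ip_network(rule.src_net) and rule.dst_port in (None, dst_port):
            return rule.action
    return DEFAULT_ACTION


print(lookup("10.1.2.3", 22))       # internal SSH
print(lookup("198.51.100.7", 22))   # external SSH
print(lookup("198.51.100.7", 443))  # external HTTPS
print(lookup("203.0.113.9", 443))   # traffic from the blocked range
```

In hardware, this loop collapses into a single parallel lookup per packet at line rate, which is where the performance/cost advantage over running the same rules on a CPU comes from.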

AVGO's huge potential doesn't stop here. As networking becomes more programmable, many applications could run on networking accelerators instead of the CPU. AVGO is actively developing AI and media accelerator chips that could be designed to communicate easily with its networking chips. This could mean that 80% of workloads never need to go through the CPU, because they could be handled by accelerators with 10x+ efficiency gains and faster speeds thanks to residing on the networking layer. This is far in the future, however, as such a software stack is even harder to develop and promote than NVDA's CUDA, which took well over a decade to grow and make a meaningful contribution to NVDA's financial performance. Nonetheless, it gives us assurance that AVGO's vision is as sharp as that of cutting-edge startups, with the added benefit of being an established, M&A-focused tech giant.
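The "80% of workloads" and "10x+" figures can be combined with Amdahl's law to see what they would imply end to end. Amdahl's law is our choice of model; the text does not specify one. The sketch assumes the 80% is measured as a share of original CPU time and that the remaining 20% gets no acceleration at all.

```python
def amdahl_speedup(offloaded_fraction: float, accel_factor: float) -> float:
    """End-to-end speedup when only `offloaded_fraction` of the work is accelerated."""
    if not 0.0 <= offloaded_fraction <= 1.0:
        raise ValueError("offloaded_fraction must be between 0 and 1")
    if accel_factor <= 0:
        raise ValueError("accel_factor must be positive")
    return 1.0 / ((1.0 - offloaded_fraction) + offloaded_fraction / accel_factor)


for accel in (10, 100, float("inf")):
    print(f"80% offloaded at {accel}x -> {amdahl_speedup(0.8, accel):.2f}x overall")
```

At 10x the system as a whole gets roughly 3.6x faster, and even infinitely fast accelerators cap out at 5x, because the 20% left on the CPU dominates. On these assumptions, growing the share of work that can leave the CPU matters as much as raw accelerator speed.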

Infrastructure Software

Infrastructure software is another future avenue for enterprise infrastructure. As the underlying hardware components become commoditised, value shifts to the management layers above them, i.e., the infrastructure software that sits between the hardware and the actual workload.

This is the biggest piece of the puzzle that AVGO is looking to expand into. VMW is the only vendor that has a comprehensive set of software tools that handles the virtualisation and management of compute, storage, and networking, with clear leadership in each field.

The most telling indicator is NVDA's DOCA (Data Centre Infrastructure-on-a-Chip Architecture) partnership with VMW. DOCA is NVDA's next CUDA-like bet. NVDA proposed a dedicated DPU responsible for accelerating and offloading virtualisation functions and other infrastructure-related processes. The value justification is that, today, the hypervisor and the workloads (VMs/containers) all run on the CPU. This introduces 40%-60% overhead, as CPU resources are consumed by virtualisation rather than by the actual workload. By offloading such infrastructure software functionality to a dedicated DSA chip, there is tremendous potential for performance gains. Unlike CUDA, however, DOCA's success is almost 80% contingent on VMW's willingness to partner with NVDA in bringing its ESXi hypervisor and NSX networking stack to the DPU.
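To put the 40%-60% overhead figure in capacity terms: if that share of a host's CPU goes to virtualisation, only the remainder is left for actual workloads. The sketch computes how much workload capacity a host would gain if a DPU absorbed some or all of that overhead. The assumption that offloaded overhead frees CPU cycles one-for-one is ours and optimistic; real gains depend on which functions actually move to the DPU.

```python
def capacity_gain(overhead_share: float, offloaded_share: float = 1.0) -> float:
    """Ratio of workload capacity after vs before offloading to a DPU.

    overhead_share: fraction of CPU consumed by virtualisation overhead today.
    offloaded_share: fraction of that overhead moved to the DPU (1.0 = all of it).
    Assumes freed cycles go one-for-one to the workload (optimistic).
    """
    if not 0.0 <= overhead_share < 1.0:
        raise ValueError("overhead_share must be in [0, 1)")
    if not 0.0 <= offloaded_share <= 1.0:
        raise ValueError("offloaded_share must be in [0, 1]")
    before = 1.0 - overhead_share
    after = 1.0 - overhead_share * (1.0 - offloaded_share)
    return after / before


for overhead in (0.4, 0.6):
    for offloaded in (0.5, 1.0):
        gain = capacity_gain(overhead, offloaded)
        print(f"overhead {overhead:.0%}, {offloaded:.0%} offloaded -> {gain:.2f}x workload capacity")
```

With the text's 40%-60% range, offloading all of the overhead would lift workload capacity by roughly 1.7x to 2.5x per host, which is the size of the prize that makes VMW's cooperation so pivotal for DOCA.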